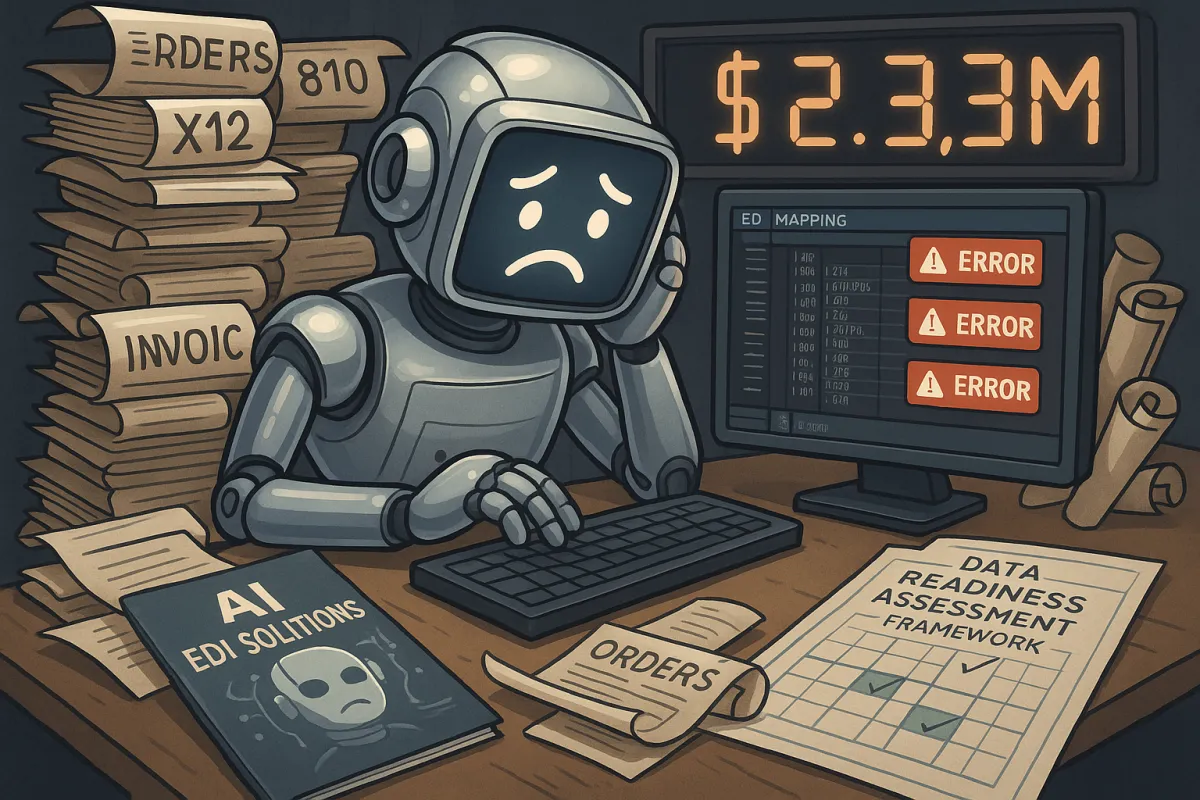

The Hidden EDI Data Quality Crisis That's Breaking 85% of AI Mapping Projects: Your Complete Data Readiness Assessment Framework to Prevent $2.3M Implementation Failures in 2026

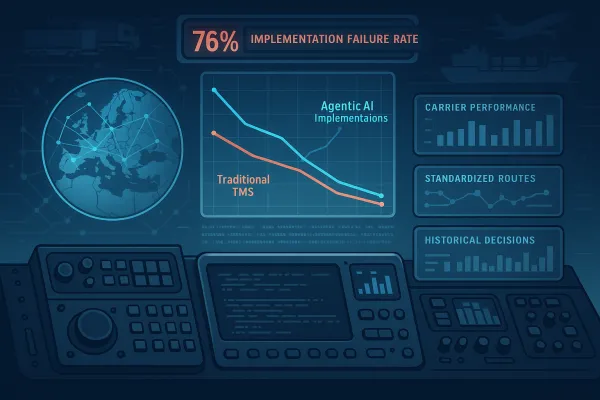

Your AI-powered EDI mapping project is about to become a $2.3M statistics casualty. RAND Corporation's comprehensive analysis shows that over 80% of AI projects fail to reach meaningful production deployment, and S&P Global's 2025 survey reveals that 42% of companies abandoned most of their AI initiatives this year, up from just 17% in 2024. The vendors selling you AI EDI mapping automation aren't telling you about the hidden data quality crisis destroying these implementations before they start.

While AI can be trained on vast libraries of EDI standards (X12, EDIFACT), XML schemas, JSON formats, and API specifications, acting as an intelligent mapping assistant or autonomous mapping engine, the reality is more sobering. The overwhelming majority of AI project failures trace back to the same root cause: the data foundation was not ready, and Informatica's 2025 CDO Insights survey identified data quality and readiness as the top obstacle to AI success, cited by 43% of respondents.

The AI EDI Mapping Promise vs. Reality Gap

EDI vendors are marketing AI-powered mapping as the solution to your most painful integration challenges. The integration of Generative AI into EDI ecosystems moves the needle from automated data exchange to intelligent process orchestration, and forward thinking companies are automating EDI processes with integrated AI, with implementation beginning by analyzing immediate use cases like mapping, error resolution, or onboarding to recognize immediate ROI.

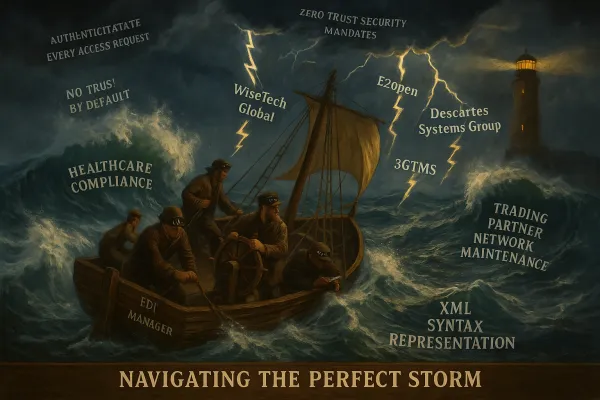

Here's what they're not mentioning: Gartner predicts that through 2026, organizations will abandon 60% of AI projects unsupported by AI-ready data. Your EDI environment, with its fragmented partner data, legacy system inconsistencies, and decades of accumulated technical debt, represents exactly the type of data foundation that makes AI fail.

Major platforms like IBM Sterling, Cleo, and Cargoson are all integrating AI capabilities into their EDI solutions, but starting AI projects before fixing the data foundation is possible but significantly increases the risk of failure, with small isolated proof-of-concepts using cleaned sample data potentially demonstrating technical feasibility while stalling during production deployment against real enterprise data.

The Data Quality Bottleneck Nobody Talks About

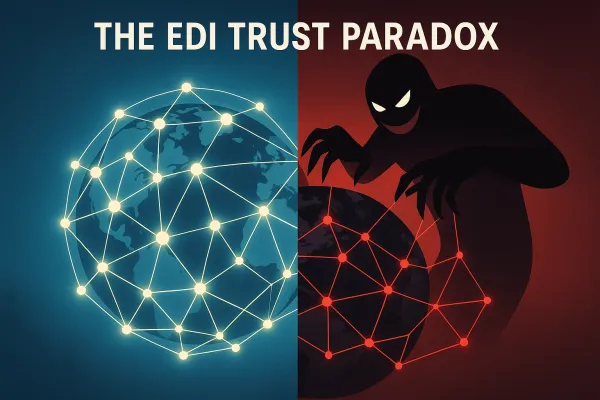

Data quality directly determines AI and machine learning outcomes because models learn from the data they're given, with inconsistent definitions, missing historical records, format mismatches, and ungoverned data access all introducing noise that degrades model accuracy.

In EDI environments, this translates to brutal realities that your vendors won't discuss during demos. A purchase order might have an invalid product code, an invoice might not match the original PO, or a data format might be slightly off-spec - exceptions that typically halt the automated process and require human intervention to investigate and resolve, creating a major bottleneck.

According to Gartner, poor data quality costs companies an average of $12.9 million per year, but in AI-powered EDI projects, the financial impact multiplies. Your data governance practices need to be bulletproof before AI deployment, requiring "strong data governance practices and a clear strategy for capturing, validating, and organizing EDI data before deploying AI solutions."

Modern TMS vendors including MercuryGate, Descartes, and Cargoson are all facing similar challenges when integrating AI capabilities with existing EDI infrastructures. The technical promise exists, but the data foundation requirements are more demanding than most organizations anticipate.

The Five Critical EDI Data Quality Assessment Areas

Before implementing AI EDI mapping, your organization needs to pass these five data readiness checkpoints that determine project success:

Partner Data Standardization: Each trading partner might have unique requirements or use slightly different interpretations of standards, with creating and maintaining these maps requiring deep technical knowledge of both EDI standards and the internal data structures of enterprise systems. You need consistent data formats across your entire trading network before AI can effectively map between them.

Historical Transaction Completeness: 80% of data scientists' time is spent preparing rather than analyzing data, and incomplete historical records prevent AI from learning accurate mapping patterns. Your transaction history needs to be complete, clean, and consistently formatted.

Semantic Mapping Consistency: The biggest hidden challenge is that "the same term can be interpreted differently by other organizations." AI mapping requires semantic consistency - the same business concept must be represented identically across all your systems and partner relationships.

Real-time Data Validation Capability: To ensure data integrity, businesses can implement data validation and cleansing at entry points, with machine learning identifying and correcting recurring issues, improving overall data quality. Your validation rules need to catch data quality issues before they reach AI processing.

Integration Point Data Integrity: Only 28% of enterprise applications are integrated despite averaging 897 apps, with the massive integration gap creating data silos that prevent organizations from leveraging their full data assets, and 95% of IT leaders reporting this impedes AI adoption and digital transformation initiatives.

Platforms like nShift, Transporeon, and Cargoson each handle these requirements differently, with varying levels of built-in data quality validation and partner standardization capabilities.

Warning Signs Your EDI Data Isn't AI-Ready

You'll know your data quality isn't sufficient for AI mapping when these patterns emerge during implementation:

Manual Data Cleaning Dominates Implementation: Data pipeline development creates significant delays, with 78% of teams facing challenges with data orchestration and tool complexity, pipeline development taking up to 12 weeks, 79% having undocumented data pipelines, while 57% report business needs change before integration requests are fulfilled. If 60-80% of your implementation time involves cleaning data rather than configuring AI, your foundation isn't ready.

Exception Handling Bottlenecks: Even in mature EDI environments, exceptions happen - a purchase order might have an invalid product code, an invoice might not match the original PO, or a data format might be slightly off-spec, with these exceptions typically halting the automated process and requiring human intervention. AI can't handle what humans struggle to resolve consistently.

Semantic Data Model Conflicts: When your trading partners use identical document structures but different business meanings for the same fields, AI mapping will produce technically correct but business-meaningless results. This is particularly challenging when working with carriers across different regions or industries.

Enterprise solutions including IBM Sterling and mid-market platforms like Cargoson each offer different approaches to handling these data quality challenges, but none can solve fundamental data governance problems through technology alone.

The Complete EDI Data Readiness Framework

Your organization needs a systematic approach to assess AI readiness before vendor selection begins. A data quality assessment framework provides a structured and repeatable process to evaluate, monitor and improve data quality.

Start with a comprehensive data audit following assessment that identifies areas of inconsistency and measures the extent of incomplete or inaccurate data, helping prioritize where improvements are needed most. This includes profiling your EDI transaction history, mapping semantic variations across trading partners, and documenting current exception handling processes.

Establish measurable data quality goals using the SMART framework. Examples include reducing data errors by a specific percentage by Q4 2025, achieving 100% completeness in critical customer records by mid-year, and implementing automated data quality checks for all new entries by Q3.

Deploy incremental AI validation through a phased approach that maintains your existing workflows as fallbacks. Start with a single EDI document type, implement AI mapping for one trading partner relationship, and gradually expand only after achieving consistent accuracy benchmarks.

Modern TMS providers handle this differently: Cargoson focuses on European manufacturers with standardized carrier integrations, while global platforms require more extensive data standardization efforts across diverse partner networks.

Implementation Success Metrics That Actually Matter

Forget vendor promises about "seamless integration" and focus on measurable outcomes that predict long-term success. Your metrics framework should include specific performance thresholds: "All transactions processed within 60 minutes" or "Reduce file format related errors by 60%."

Top AI leaders achieve $10.3x ROI through advanced data integration, with IDC's 2024 research of 4,000+ business leaders showing companies with strong integration achieving average $3.7x ROI from AI, organizations realizing value within 13 months, and 29% of AI leaders implementing solutions in less than 3 months, with the correlation between integration maturity and AI success undeniable across all industries studied.

Track data quality improvement benchmarks separately from AI implementation metrics. Monitor exception rates, manual intervention frequency, and semantic mapping accuracy as leading indicators of AI readiness rather than lagging indicators of project problems.

Cost-benefit analysis should account for both implementation expenses and avoided failure costs. The average paper requisition to order costs a company $37.45 in North America, while with an EDI requisition to order, costs are reduced to $23.83, with the savings being clear but the barrier being getting there.

The 2026 Action Plan: Building AI-Ready EDI Infrastructure

You have a narrow window to prepare before AI EDI mapping becomes table stakes rather than competitive advantage. Start with immediate data quality assessment using established frameworks rather than jumping into AI implementation.

Your vendor evaluation criteria must prioritize data handling capabilities over feature lists. Look for platforms that offer transparent data quality scoring, built-in validation frameworks, and proven integration patterns with your existing TMS/ERP systems.

Consider a modernization approach that embraces "hybrid EDI+API integration to avoid ripping or replacing technology" while building toward "automated onboarding and exception handling." This strategy allows you to improve data quality incrementally while maintaining operational continuity.

When evaluating solutions, consider Cargoson alongside established platforms like IBM Sterling, Cleo, and TrueCommerce. Each vendor handles AI integration differently, but the winners will be those that solve data quality challenges before implementing artificial intelligence, not after.

The companies that succeed with AI EDI mapping in 2026 won't be the first to implement - they'll be the ones that built bulletproof data foundations first. Your $2.3M implementation budget is safer invested in data quality infrastructure than cutting-edge AI features that can't overcome poor data governance.