The Critical AI-Powered EDI Vendor Evaluation Framework: How to Prevent the 95% Implementation Failure Rate by Assessing Accountable AI Capabilities and Production Readiness Before Procurement in 2026

Jitterbit recently announced its EDI AI Assistant, which uses natural language processing to democratize EDI management, but this launch points to a much larger challenge. Some 95% of enterprise AI pilots fail to deliver measurable business value, the clearest manifestation of what researchers call the "GenAI Divide". For supply chain professionals evaluating AI-powered EDI platforms, this failure rate isn't just a statistic: it's a warning that traditional vendor evaluation approaches won't work.

The problem isn't the technology itself. Purchasing AI tools from specialized vendors and building partnerships succeed about 67% of the time, while internal builds succeed only one-third as often. Yet most EDI vendor evaluations still focus on demo performance and feature checklists rather than the accountable AI capabilities that separate successful implementations from expensive failures.

The Accountable AI Gap in EDI Vendor Assessments

Here's what most procurement teams miss: Jitterbit's announcement builds upon their ISO/IEC 42001 certification, the international standard for AI management systems. This isn't marketing fluff – it's the difference between AI that works in demos and AI that delivers production value at scale.

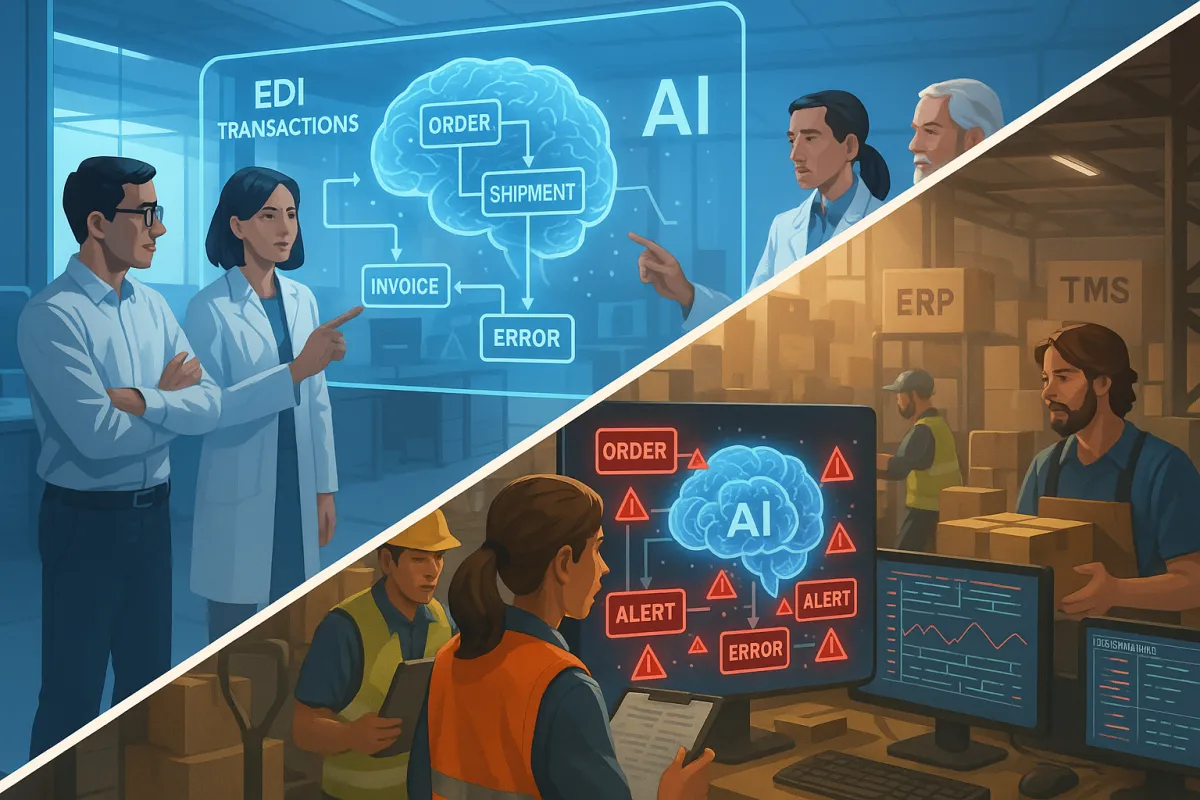

AI governance frameworks exist so that even the most advanced models are held accountable for bias propagation, data misuse, and ethical blind spots. When evaluating vendors like TrueCommerce, SPS Commerce, or newer players like Cargoson, you need to dig deeper than their AI feature announcements.

The core issues that destroy post-implementation ROI include explainability gaps in EDI decision-making, lack of human oversight in automated workflows, and missing audit trails for AI-driven data transformations. An AI model can work exactly as intended, yet when it is deployed in an unstructured, non-integrated environment with no shared understanding or controls, even the highest-quality system produces results that appear inconsistent or unreliable.

Beyond Demo Performance: Technical Architecture Assessment

Stop getting seduced by impressive demonstrations. There's a massive gap between a demo and production-ready enterprise AI, and MIT's data backs this up: internal builds succeed only about one-third as often as vendor partnerships.

Your evaluation must include integration stress testing with your specific ERP, TMS, and WMS environments. TrueCommerce offers seamless integration with various business systems including ERP and WMS platforms, while transport-focused businesses should consider platforms like Cargoson that offer direct TMS integration alongside broader solutions like Cleo and OpenText.

Real production readiness means evaluating data flow documentation under peak transaction volumes, error handling across trading partner variations, and rollback capabilities when AI models drift. AI-driven analytics can now assess entire data populations in real time, detecting anomalies, policy deviations, or compliance lapses as they happen.

Integration Risk Assessment Framework

Ask potential vendors to document their model retraining triggers, version control processes, and change management protocols. The AI assistant must be fully integrated with existing iPaaS capabilities, enabling organizations to automate and orchestrate integrations between EDI, ERP systems, and other applications. Without this integration depth, you're buying a point solution that will create more data silos.

Governance and Explainability Requirements

The bottleneck for AI adoption in 2026 isn't the technology itself; it's trust. Your vendor evaluation must prioritize explainable AI capabilities that let your team understand why specific EDI transformations or partner recommendations were made.

ISO/IEC 42001 specifies requirements for establishing, implementing, maintaining, and continually improving an Artificial Intelligence Management System (AIMS) within an organization, including the policies and objectives that govern responsible AI development. Treat this certification as a baseline requirement for serious EDI AI implementations.

Evaluate vendors on their human-in-the-loop governance structures. Can business users override AI recommendations? Are decision points clearly documented? The difference between success and failure is whether you can demonstrate control with documented approvals, enforceable controls, and audit-ready evidence that holds up under scrutiny.
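To make the override and audit-evidence questions concrete, here is a minimal sketch of what a documented decision point could look like. It is not any vendor's actual data model: the `MappingDecision` class, its field names, and the sample transaction values are all hypothetical, chosen only to illustrate the kind of record worth asking a vendor to demonstrate.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class MappingDecision:
    """One AI-proposed value for an EDI field, plus its audit history (hypothetical model)."""
    transaction_id: str
    field_name: str
    ai_value: str
    ai_rationale: str
    final_value: str = field(init=False)
    history: list = field(default_factory=list, init=False)

    def __post_init__(self):
        # The AI proposal is the starting point and the first audit entry.
        self.final_value = self.ai_value
        self._log("ai_proposed", "model", self.ai_value, self.ai_rationale)

    def _log(self, event, actor, value, note):
        # Append-only history: earlier entries are never rewritten.
        self.history.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "event": event,
            "actor": actor,
            "value": value,
            "note": note,
        })

    def override(self, user, new_value, reason):
        """Let a business user replace the AI value, but only with a stated reason."""
        if not reason.strip():
            raise ValueError("an override must carry a documented reason")
        self.final_value = new_value
        self._log("human_override", user, new_value, reason)


# Illustrative values only.
decision = MappingDecision(
    transaction_id="850-000123",
    field_name="ship_to_code",
    ai_value="DC04",
    ai_rationale="matched partner address to DC04",
)
decision.override("j.doe", "DC07", "partner moved fulfilment to DC07 this quarter")
```

The point of the sketch is the shape of the evidence: every value change names an actor, a timestamp, and a reason, and the AI's original rationale survives the override. A vendor whose platform cannot export something equivalent will struggle to support an audit.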

Test vendor transparency around model training data, bias detection capabilities, and algorithmic audit trails. There is considerable skepticism around AI due to misinformation and bias issues. ISO/IEC 42001 certification serves as evidence of a commitment to responsible AI use, which makes it easier to build customer trust.

Production Readiness Validation Beyond Marketing Claims

Your evaluation checklist must include monitoring and drift detection capabilities that go beyond basic performance metrics. MLOps provides the operational framework to ensure AI systems remain reliable, fair, and compliant throughout their lifecycle with automated deployment pipelines and built-in governance checks.
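As a rough illustration of what "drift detection beyond basic performance metrics" can mean at its simplest, the sketch below tracks the error rate of AI-driven mappings over a rolling window and flags when it exceeds a multiple of an agreed baseline. This is a plain threshold check, not a statistical drift test, and the `DriftMonitor` class, its parameters, and the sample numbers are assumptions made for the example rather than any vendor's implementation.

```python
from collections import deque


class DriftMonitor:
    """Flag drift when the rolling error rate exceeds baseline * tolerance (illustrative)."""

    def __init__(self, baseline_error_rate, window=500, tolerance=2.0, min_samples=50):
        self.baseline_error_rate = baseline_error_rate
        self.tolerance = tolerance
        self.min_samples = min_samples
        # Only the most recent `window` outcomes are kept.
        self._outcomes = deque(maxlen=window)

    def record(self, had_error):
        """Record whether one processed transaction failed validation."""
        self._outcomes.append(1 if had_error else 0)

    @property
    def error_rate(self):
        if not self._outcomes:
            return 0.0
        return sum(self._outcomes) / len(self._outcomes)

    def drifted(self):
        # Avoid raising alarms on a handful of transactions.
        if len(self._outcomes) < self.min_samples:
            return False
        return self.error_rate > self.baseline_error_rate * self.tolerance


# Hypothetical numbers: 1% baseline, then 10 failures in the last 100 transactions.
monitor = DriftMonitor(baseline_error_rate=0.01, window=100, min_samples=20)
for _ in range(90):
    monitor.record(had_error=False)
for _ in range(10):
    monitor.record(had_error=True)
needs_review = monitor.drifted()
```

When evaluating a vendor, the useful questions are what plays the role of `baseline_error_rate` and `tolerance` in their platform, who sets those values, and what happens automatically (alert, rollback, retraining) once the threshold is crossed.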

Demand proof of continuous model validation under real EDI transaction loads. How do vendors handle trading partner specification changes that could break AI-driven mappings? What happens when transaction volumes spike during peak seasons?
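One way to probe the specification-change question is to ask whether the vendor runs regression checks against known-good samples whenever a mapping is regenerated. The sketch below shows the idea under heavy simplification: `check_mapping`, the two mapping functions, and the sample purchase-order segment are hypothetical, and real EDI parsing would read delimiters from the interchange header rather than splitting on a hard-coded `*`.

```python
def check_mapping(mapping_fn, golden_samples):
    """Run a mapping over known-good samples and list every field that differs."""
    failures = []
    for name, (raw_segment, expected) in golden_samples.items():
        actual = mapping_fn(raw_segment)
        for key, want in expected.items():
            got = actual.get(key)
            if got != want:
                failures.append((name, key, want, got))
    return failures


def map_v1(raw_segment):
    # Assumed layout: BEG*purpose*type*po_number*release*date
    parts = raw_segment.split("*")
    return {"po_number": parts[3], "order_date": parts[5]}


def map_v2(raw_segment):
    # A hypothetical regenerated mapping that reads the date from the wrong element.
    parts = raw_segment.split("*")
    return {"po_number": parts[3], "order_date": parts[4]}


# One illustrative golden sample: raw segment plus the values a human has verified.
golden_samples = {
    "acme_850_basic": (
        "BEG*00*SA*PO12345**20260115",
        {"po_number": "PO12345", "order_date": "20260115"},
    ),
}

v1_failures = check_mapping(map_v1, golden_samples)
v2_failures = check_mapping(map_v2, golden_samples)
```

Here `v1_failures` is empty while `v2_failures` reports the broken `order_date` field, which is the signal that should block a regenerated mapping from reaching production. A vendor should be able to show an equivalent gate and explain who maintains the verified samples for each trading partner.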

Success requires an implementation approach that combines vendor expertise with frontline ownership – vendors bring technical knowledge while business leaders bring lived experience to validate and refine the technology, creating a collaborative approach that lowers barriers and accelerates ROI.

Vendor Stability and Support Structure Assessment

Evaluate the vendor's track record with enterprise AI implementations beyond EDI. Many businesses opt for EDI managed services rather than keeping expertise in-house, as it ensures EDI is carried out correctly and seamlessly with active support for onboarding new partners quickly.

Weighted Scorecard Methodology for Consistent Comparison

Create a scoring framework that weights accountable AI capabilities at 40%, integration architecture at 30%, vendor stability at 20%, and cost considerations at 10%. This weighting reflects the true drivers of implementation success versus failure.
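The weighting can be applied mechanically so that every vendor is scored the same way. The following is a minimal sketch assuming each category is rated on a 0 to 5 scale by the evaluation team; the scale, the category keys, and the two sets of ratings are illustrative assumptions, and only the four weights come from the framework above.

```python
# Weights from the scorecard described above; they sum to 1.0.
WEIGHTS = {
    "accountable_ai": 0.40,
    "integration_architecture": 0.30,
    "vendor_stability": 0.20,
    "cost": 0.10,
}


def weighted_score(ratings):
    """Combine per-category ratings (assumed 0-5 scale) into one weighted score."""
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    for name in WEIGHTS:
        if not 0 <= ratings[name] <= 5:
            raise ValueError(f"rating for {name} must be between 0 and 5")
    return sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)


# Hypothetical ratings for two unnamed vendors.
vendor_a = {"accountable_ai": 4, "integration_architecture": 3, "vendor_stability": 5, "cost": 2}
vendor_b = {"accountable_ai": 2, "integration_architecture": 4, "vendor_stability": 4, "cost": 5}

score_a = round(weighted_score(vendor_a), 2)  # 1.6 + 0.9 + 1.0 + 0.2 = 3.7
score_b = round(weighted_score(vendor_b), 2)  # 0.8 + 1.2 + 0.8 + 0.5 = 3.3
```

In this made-up comparison, vendor B is the cheaper option and integrates slightly better, yet vendor A still scores higher because accountable AI carries four times the weight of cost. That is the behavior the weighting is designed to produce.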

For accountable AI assessment, evaluate ISO/IEC 42001 certification status, explainable AI documentation, bias detection capabilities, and audit trail completeness. For established vendors like TrueCommerce and SPS Commerce, compare their AI governance maturity against emerging specialists like Cargoson that may offer more focused transport logistics AI capabilities.

Businesses switching from SPS Commerce can reportedly save up to 40% in costs, which shows the savings potential of choosing the right provider, but cost alone doesn't predict implementation success.

Reference check strategies should focus on post-go-live performance rather than initial deployment experiences. Ask existing customers about model drift incidents, support responsiveness during AI-related issues, and the vendor's track record for governance compliance updates.

Implementation Risk Prevention Strategy

Specialized vendor-led projects succeed approximately 67% of the time, while internal builds succeed only about one-third as often. These GenAI failures are avoidable. Your prevention strategy must address the root causes of the 95% failure rate before selecting vendors.

Start with operational problem identification rather than AI feature exploration. Begin with pilot projects that address expensive operational problems with clearly defined success metrics – predictive maintenance, quality control automation, and process optimization provide measurable results that justify continued investment.

Plan for integration barriers early. Legacy ERP systems may require middleware solutions that some vendors handle better than others. Transport and logistics solutions like Cargoson integrate directly with TMS platforms, while retail-focused providers like SPS Commerce specialize in marketplace integrations, with 10% of logistics workflow automation possible through EDI.

Build vendor relationships for long-term AI evolution rather than point-in-time implementations. Companies must adopt governance frameworks to ensure ongoing compliance with evolving regulations, as non-compliance can lead to legal penalties, reputational harm, and operational disruptions.

The vendor landscape will continue evolving rapidly, but organizations that build systematic evaluation frameworks focused on accountable AI capabilities rather than demo performance will avoid joining the 95% failure statistic. Your procurement decision shapes not just EDI efficiency, but your organization's ability to trust and scale AI across supply chain operations.